Newsrooms big and small are embracing AI to translate, write scripts and fact-check in real time. In a Knight Center roundtable, five top journalists examined its visible and hidden risks.

“AI showed up kicking the door in,” moderator Laís Martins said in her opening remarks at a roundtable discussion on the use of artificial intelligence in Brazilian newsrooms, organized by the Knight Center for Journalism in the Americas.

“Our industry had barely recovered from the social media crisis, from figuring out how to deal with these new digital spaces, when it was hit by this new major challenge,” said Martins, a reporter at The Intercept Brasil and an AI Accountability Network fellow at the Pulitzer Center.

She was joined by Daniela Braga, artificial intelligence editor at Folha de S.Paulo; Tatiana Dias, an investigative journalist; Jade Drummond, executive director at Núcleo Jornalismo; and Marcela Duarte, innovation director at Aos Fatos.

More than 200 people attended the webinar, including many university students from across Brazil.

Dias, a fellow with the Texas-based nonprofit Tech Policy Press and a seasoned reporter covering technology in Brazil, said she has been both enthusiastic and critical in her coverage of artificial intelligence.

In April, she will be part of a group launching Ctrl + Z, an organization that will advocate for digital rights through investigation and litigation. She noted that in a typical university classroom virtually all students regularly use ChatGPT, and that, according to the Reuters Institute’s Digital News Report 2025, 9% of Brazilians already use AI chatbots to get their news.

“We’re no longer debating whether we’re going to use it or whether we should use it,” she said. “That ship has already sailed.”

For Dias, understanding the technology’s risks is critical because the industry is pushing newsrooms to adopt its products with great urgency, an urgency she said is driven mostly by the companies’ own interests.

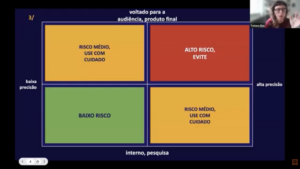

“We do not have to take a Luddite stance of destroying these new technologies,” she said. “But we must use them in a critical and thoughtful way, without feeding sensitive information into these systems.” She presented a series of decision-making matrices inspired by frameworks the Pulitzer Center and Reporters Without Borders created on the ethical use of AI in newsrooms.

“In this diagram, we avoided things that require high accuracy and are audience-facing, because they were very high risk,” Dias said.

“We have to consider whether this content is intended for an audience, whether it will be a final product,” she said. “Is it a text that people will read, an Instagram carousel that people will view, something people will have access to, or am I using it for internal research, to analyze a 900-page document? Why am I using this AI? This will determine the risk level of using the tool.”

Dias said tools that create images shown to the audience, or that produce transcripts for internal use, are typically medium risk, meaning they can be used, but with caution.

“I’ve had countless instances of using AI to summarize an interview, and the AI simply invents a quote. It rewrites what the person said in a different way and completely changes the meaning,” she said.

She added that AI can be very useful for analyzing large volumes of material, such as transcribing several hours of YouTube video to find where a quote appears, even if its accuracy is low.

“I recommend it,” she said. “It’s for internal use.”
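Dias’s two guiding questions (is the output audience-facing, and how much accuracy does the task demand?) can be read as a simple two-by-two grid. The toy Python sketch below encodes one such reading. The function name `assess_risk` and the cell assignments are hypothetical, inferred from the examples she gave rather than taken from the Pulitzer Center or Reporters Without Borders frameworks.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


def assess_risk(audience_facing: bool, needs_high_accuracy: bool) -> Risk:
    """Toy two-question risk check loosely based on the examples in Dias's talk.

    Hypothetical mapping: the cells reflect an editorial reading of her
    examples, not a published matrix.
    """
    if audience_facing and needs_high_accuracy:
        # e.g. facts or quotes published directly to readers
        return Risk.HIGH
    if audience_facing or needs_high_accuracy:
        # e.g. images shown to the audience, or transcripts for internal use
        return Risk.MEDIUM
    # e.g. skimming hours of video internally to find where a quote appears
    return Risk.LOW


print(assess_risk(audience_facing=False, needs_high_accuracy=False).value)  # low
```

The point of the grid is that the same tool can land in different cells depending on how its output is used, which is why Dias framed the question as “Why am I using this AI?”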

Dias ended her presentation by warning that the AI industry wants to commodify the thought process.

“We need to defend our creativity, our humanity and our ability to improvise—things that machines can’t do,” she said. “I think that if we lose our touch there, we’ll end up with a polarized mindset that turns our ideas into a business model.”

Jade Drummond, executive director at the tech news website Núcleo Jornalismo, followed with a look at how her own outlet approaches AI. Núcleo’s staff focuses on in-depth reporting and on building applications and tools to monitor and track public data.

The newsroom’s use of AI in the editorial process is governed by an internal policy. “The main point of this policy is that we view artificial intelligence as a tool that will not replace humans,” Drummond said. “It should be used as a tool that can enhance our work, whether our investigations or the digital products we develop.”

Drummond stressed that reporters’ own style and voice must come through in their stories. But the outlet does not shy away from offering AI-based tools, such as Nuclito, which helps users search Núcleo’s content, and Legislatech, which helps track public documents and legislation.

Disclosure and transparency about AI use are key, she said: most audiences consider it important for outlets to disclose when AI was used for images, audio, video, photo editing, writing text or anything else.

Moving from a small outlet to a large one, the next panelist was Daniela Braga, AI editor at Folha de S.Paulo, one of the biggest newspapers in Latin America. She presented a growing portfolio of tools the outlet is developing for its more than 300 journalists, including a translator, a transcription service, a headline generator and a short-video scriptwriter, the last of which is especially pressing given video’s growing importance.

“Journalists can record themselves with the help of AI, which will reduce the time it takes them to produce a script based on something they’ve already created,” she explained. “And for our readers, we launched a pilot project to create recipes using AI. It was the first product we released. Later, we partnered with a hospital to create a chatbot for information on breast and prostate cancer using the hospital’s own database.”

The widely used Folha Manual, akin to the AP Stylebook, served as the basis for a copy-editing app that helps reporters standardize and proofread their text. “The manual is a well-known guide to Brazilian journalism, and it’s been our bible that we’ve always followed. So we took the latest edition of the manual and used it to train our AI to correct texts according to what’s written in the manual,” Braga said. “So, does Folha use Roman numerals or not? No, it doesn’t. So, the AI will make this correction, but it will never do so automatically. It always requires user action.”

Fact-checking with AI was also on the agenda. Marcela Duarte, innovation director at the fact-checking site Aos Fatos, said the outlet cannot afford not to use AI. “We’ve seen productivity gains, so we can analyze large databases that we couldn’t do manually, or that I couldn’t do alone or with a small team, tasks that would take a very long time, perhaps a Herculean year-long effort,” she said.

With a polarizing election approaching, Aos Fatos is testing an AI tool called BuscaFatos that will help reporters fact-check in real time. “We have Escriba, which provides transcripts for our newsroom and for some organizations. Escriba transcribes the live stream or debate in real time, and this new app [BuscaFatos] will match the spoken phrases against the Aos Fatos database, immediately showing the reporter using it whether a particular phrase has already been fact-checked by Aos Fatos and whether it is false or true,” Duarte said.

For Duarte, these tools matter because misinformation spreads much faster and further, while fact-checking comes later, trying to catch up. “Speed, in this case, is essential. So, the faster we can get to debunking certain information that was said in a debate, the better.”
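The matching step Duarte described, comparing transcribed phrases against an archive of earlier fact-checks, can be illustrated with a toy sketch. The Python below is not Aos Fatos’s implementation: the `FactCheck` record, the `match_phrase` function, the 0.8 similarity threshold and the sample claims are all hypothetical. It uses character-level fuzzy matching from the standard library’s `difflib`, whereas a production system might rely on semantic search instead.

```python
from __future__ import annotations

from dataclasses import dataclass
from difflib import SequenceMatcher


@dataclass
class FactCheck:
    claim: str    # the statement that was checked (hypothetical record)
    verdict: str  # e.g. "false" or "true"


def normalize(text: str) -> str:
    # Lowercase and collapse whitespace so trivial differences don't block a match.
    return " ".join(text.lower().split())


def match_phrase(phrase: str, archive: list[FactCheck],
                 threshold: float = 0.8) -> FactCheck | None:
    """Return the archived fact-check most similar to a spoken phrase, or None."""
    target = normalize(phrase)
    best, best_score = None, 0.0
    for check in archive:
        score = SequenceMatcher(None, target, normalize(check.claim)).ratio()
        if score > best_score:
            best, best_score = check, score
    # Only surface a match to the reporter if it is close enough.
    return best if best_score >= threshold else None


# Invented sample archive, for illustration only.
archive = [
    FactCheck("The bridge was built in 1990", "false"),
    FactCheck("The city has 2 million residents", "true"),
]
hit = match_phrase("the bridge was built in 1990", archive)
print(hit.verdict if hit else "not yet checked")  # false
```

The threshold trades recall for precision: set it too low and reporters see spurious matches mid-debate; set it too high and reworded versions of an already-checked claim slip through.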

Watch the full webinar for free on the Knight Center’s YouTube channel.