Media outlets and content creators are turning to "algospeak"—the alteration of words or the use of euphemisms—to maintain visibility and evade algorithmic restrictions.

When the news portal En Blanco y Negro, based in the city of Chihuahua in northern Mexico, set out to grow its social media audience, it found that major digital platforms frequently restricted the visibility of topics such as crime and insecurity.

The media outlet's team, led by its then-news director Roberto Álvarez, noticed that posts containing words such as “executed,” “drugs” or “narco” received less traffic—or were even flagged for alleged violations of content guidelines.

It was then that they began to adopt a strategy that influencers and content creators use to outsmart social media algorithms: inventing or altering words.

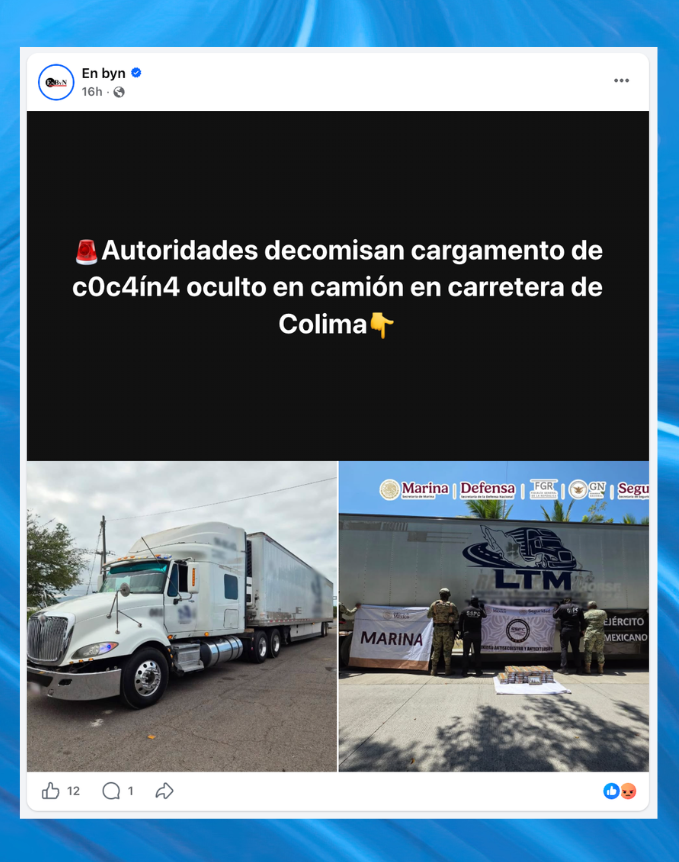

When the digital news outlet En Blanco y Negro uses altered words in headlines, traffic starts to climb. (Photo: En Blanco y Negro on Facebook)

“‘Ejecutar’ is the word we use most in Mexico to say that someone was shot to death,” Álvarez told LatAm Journalism Review (LJR). “What could we do to avoid putting ourselves at risk? We started using the word ‘desvivir’—which doesn’t exist; it’s not in the dictionary.”

The English equivalent of “desvivir” is “unalive.”

The team also began changing the spelling of words to an equivalent formed by letters, numbers and symbols. Thus, “ejecutar” became “3jecut4r,” “drogas” became “dr0g4s” and “narco” became “n@rcø.”

“When we start monitoring traffic in real time and see that it’s very low, we immediately change the keywords—or come up with new ones—using slang more specific to this region of Chihuahua; almost instantly, that same post sees its traffic start to climb,” Álvarez said.

The case of En Blanco y Negro illustrates a practice that is becoming increasingly widespread among media outlets on social media: altering language to evade algorithmic content moderation. Known as “algospeak,” this strategy seeks to maintain content visibility and avoid sanctions, although it can also alienate readers and compromise the clarity of the message.

“Algospeak”—derived from the terms “algorithm” and “speak”—consists of modifying, substituting or disguising words considered sensitive through euphemisms, spelling alterations or symbols.

Its use in the news is not an isolated phenomenon. Research by the Autonomous University of Chihuahua (UACH, for its Spanish initials)—published in September 2025 in the academic journal Doxa—documented how at least three digital media outlets in that city are adapting their language on Facebook to evade algorithmic moderation.

Based on an analysis of 312 headlines, the study—titled “When the Dead Become ‘Unalived’: Journalistic Algospeak as a Response to Digital Censorship”—found that journalists systematically resort to orthographic alterations, euphemisms and, to a lesser extent, symbols or metaphors, in order to publish news content—primarily concerning violence, deaths and security—without facing penalties from digital platforms.

“This is a novel presentation of a challenge that journalists have always faced—namely, evading censorship,” Mario Alberto Valdez—a journalist, UACH professor and one of the study’s authors—told LJR. “Now, we are viewing it through a technological lens.”

The research found that more than 90 percent of posts relied on the orthographic alteration of sensitive words, followed by the use of lexical euphemisms, that is, words or expressions that substitute for others considered offensive. Most headlines with altered words were concentrated in posts about deaths, security and drug trafficking, with a lesser presence in content concerning drugs, sexuality and human abuse.

The fact that crime-related topics are the ones most heavily penalized by social media platforms poses a challenge for media outlets in states like Chihuahua, where this type of news is among the most consumed by the audience, Valdez said.

However, topics related to violence are not the only ones restricted by algorithms. Profiles that address gender issues must also resort to “algospeak” to ensure their content reaches their audience, according to separate research published in March 2026 by the Mexican media outlet La Cadera de Eva and the organization Article 19.

Sofía Márquez, a content creator and founder of We R Women On Fire—a Tijuana-based platform focused on feminism and gender-based violence—said that in many of her Instagram posts, she has to alter certain terms.

“The penalties range from completely removing content and revoking the use of certain tools—on one occasion, they blocked my ability to go live for six months—to the risk of having your account deleted entirely,” Márquez told LJR.

In her posts—which cover cases of violence against women and feminist movements—terms such as “v1olador,” “s3xual” and “f3minicid4” are used, both in text and in images.

“It is striking how informative, reflective and conscious content is consistently censored, while other content that replicates violence is disseminated with greater force within the patriarchal algorithm we see on social media,” Márquez said.

Between 2023 and 2025—when the migration crisis in Mexico saw several peaks—Meta’s platforms frequently penalized En Blanco y Negro’s content related to migration, Álvarez said.

We R Women On Fire, a platform covering feminist and gender issues, alters certain terms in its content to avoid penalties from social media platforms. (Photo: We R Women On Fire on Instagram and Canva)

“If you used the word ‘immigrant’ or ‘undocumented’ on Facebook, they could even hit you with a one-day ban on your account,” Álvarez said. “The notification would say, ‘You may be violating our policies regarding violence or discrimination.’ And we would think, ‘Of course not! I’m reporting the news!’”

It was then that the newsroom found an alternative in euphemisms. To avoid saying “immigrants,” they opted to use “people in a situation of mobility.”

At times, the euphemisms they chose bordered on sensationalism, Álvarez said. On one occasion, the outlet reported on the sexual abuse of minors in a marginalized region of Chihuahua.

They decided to use a euphemism: “Predator unleashes on 500 children in Punta Oriente.” Although some readers expressed outrage, Álvarez said, the media outlet chose to proceed in this manner to ensure the story would not be rendered invisible.

“Curiously enough, by being more sensationalist, Facebook actually did drive traffic to us,” Álvarez said. “It has to be that way, because we aren’t going to do any authority the favor of not reporting.”

Meta says on its website that its systems reduce the exposure of content that violates its Community Standards, “in order to minimize possible harm to our community.” This includes content related to graphic violence, hate speech, suicide, self-harm and fraud.

The company says it permits such sensitive content if it is “of journalistic interest,” but first subjects it to a human rights-based analysis.

However, if a media outlet or journalist disagrees with a decision made by the platform, there is not much that can be done, Álvarez said.

“To get a problem resolved, you can submit an appeal, but everything is highly automated,” he said. “It’s almost impossible to get in touch with anyone.”

While “algospeak” helps disseminate information of public interest, it can also compromise journalistic clarity, according to the UACH research.

“Young people immediately understand the rationale behind these strategies because they live it day in and day out,” Valdez said. “But we encountered profiles of older people who did not understand this way of handling information.”

Márquez said that, at times, followers of We R Women On Fire perceive the alteration of words as a lack of seriousness or as a way of softening the content.

“I always like to share the facts as they are—without hiding or sugarcoating anything—and when I censor words or images, there are people in the audience who question why I do it,” she said. “They assume it is because I don’t want to show the full reality.”

When readers of En Blanco y Negro expressed their discontent with the altered words and euphemisms, the outlet explained its reasoning in an August 2025 article written by Álvarez, which argued that “algospeak” was a necessary strategy.

The article includes a glossary of 30 terms that the media outlet routinely modifies in areas including organized crime, deaths and sexual offenses.

“In a nutshell,” he said, “it’s not ignorance; it’s digital survival.”

En Blanco y Negro shared an image explaining why it uses “algospeak.” (Photo: Screenshot of enblancoynegro.com.mx and Canva)

This article was translated with AI assistance and reviewed by Teresa Mioli